The Arc Prize Foundation, a nonprofit co-founded by prominent AI researcher François Chollet, announced on Monday that it has developed a new, challenging test to measure the general intelligence of AI models.

The test, called ARC-AGI-2, has so far stumped most models.

AI models known for their reasoning capabilities, such as OpenAI’s o1-pro and DeepSeek’s R1, have scored between 1% and 1.3% on ARC-AGI-2, according to the Arc Prize Foundation. Meanwhile, powerful non-reasoning models like GPT-4.5, Claude 3.7 Sonnet, and Gemini 2.0 Flash scored around 1%.

How ARC-AGI-2 Works

The ARC-AGI tests are designed as visual puzzles, requiring AI models to identify patterns in grids of colored squares and generate the correct “answer” grid. These problems force AI to adapt to novel challenges it hasn’t encountered before.
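As a rough illustration of the puzzle format described above, the sketch below stores each grid as a list of rows of integer color codes and counts an answer as correct only when it matches the expected grid cell for cell. The toy tasks, the `mirror_rows` rule, and all function names are hypothetical and are not taken from ARC-AGI-2 itself.

```python
from typing import Callable, Dict, List

Grid = List[List[int]]   # each integer stands for one colored square
Task = Dict[str, Grid]   # {"input": puzzle grid, "output": expected answer grid}


def mirror_rows(grid: Grid) -> Grid:
    """Hypothetical hidden rule for the toy tasks: flip each row left to right."""
    return [row[::-1] for row in grid]


def is_correct(predicted: Grid, expected: Grid) -> bool:
    """An answer counts only if every cell matches the expected grid."""
    return predicted == expected


def score(solver: Callable[[Grid], Grid], tasks: List[Task]) -> float:
    """Fraction of tasks for which the solver produces the exact answer grid."""
    if not tasks:
        return 0.0
    solved = sum(is_correct(solver(t["input"]), t["output"]) for t in tasks)
    return solved / len(tasks)


# Two made-up tasks that both follow the mirror rule (assumption, for illustration).
TOY_TASKS: List[Task] = [
    {"input": [[1, 0, 0], [2, 2, 0]], "output": [[0, 0, 1], [0, 2, 2]]},
    {"input": [[3, 0], [0, 4]], "output": [[0, 3], [4, 0]]},
]

if __name__ == "__main__":
    print("solver that found the rule:", score(mirror_rows, TOY_TASKS))
    print("solver that copies the input:", score(lambda g: g, TOY_TASKS))
```

Exact-match scoring is used here because a grid with even one wrong square is a wrong answer; partial credit would blur the difference between a model that found the rule and one that guessed.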

To establish a human baseline, the Arc Prize Foundation tested over 400 people on ARC-AGI-2. On average, humans correctly answered 60% of the test’s questions—far exceeding AI performance.

A More Accurate AGI Benchmark

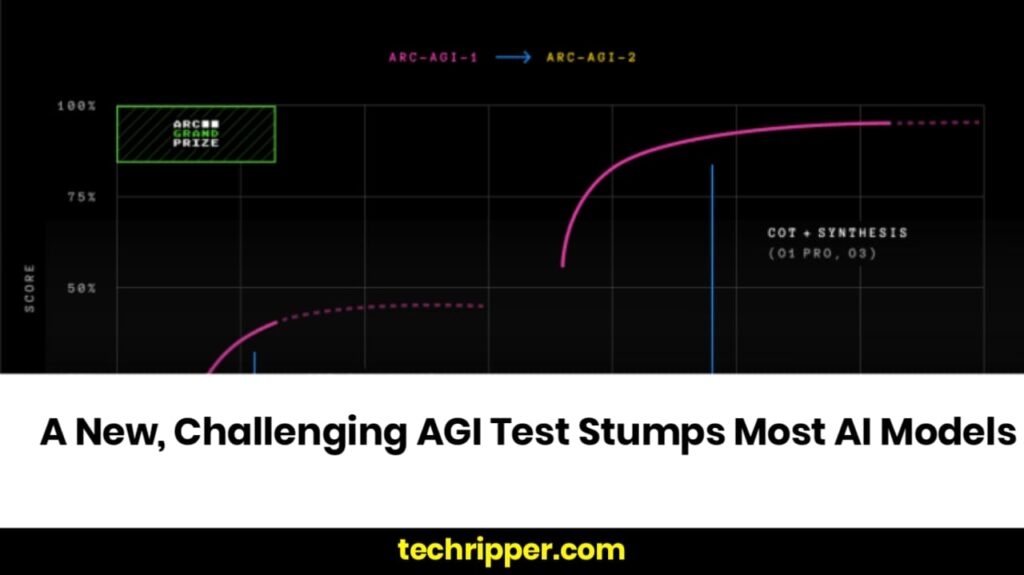

François Chollet stated that ARC-AGI-2 is a better measure of an AI model’s actual intelligence than its predecessor, ARC-AGI-1.

The new test prevents AI from relying on brute-force methods—which require massive computing power—to solve problems. ARC-AGI-1 had this flaw, as OpenAI’s o3 model used sheer computational strength to eventually surpass human performance in December 2024.

To address this flaw, ARC-AGI-2 introduces a new metric: efficiency. Models must also interpret patterns on the fly rather than rely on memorization.

“Intelligence is not solely defined by the ability to solve problems or achieve high scores. The efficiency with which those capabilities are acquired and deployed is a crucial, defining component.”

The Arc Prize 2025 Challenge

The launch of ARC-AGI-2 comes amid growing concerns in the AI industry that existing benchmarks fail to measure true artificial general intelligence (AGI).

Thomas Wolf, co-founder of Hugging Face, recently told TechCrunch that AI benchmarks are insufficient for evaluating key AGI traits, such as creativity and adaptability.

To push AI research forward, the Arc Prize Foundation announced a new Arc Prize 2025 contest, challenging AI developers to achieve 85% accuracy on ARC-AGI-2 while only spending $0.42 per task.
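The contest target combines two conditions, an accuracy floor and a cost ceiling, and a run has to satisfy both. A minimal sketch of that check follows, assuming cost is averaged over all attempted tasks; the `RunResult` type and `meets_target` function are hypothetical names, and only the 85% and $0.42 figures come from the announcement.

```python
from dataclasses import dataclass

TARGET_ACCURACY = 0.85     # 85% accuracy, from the contest description
MAX_COST_PER_TASK = 0.42   # US dollars per task, from the contest description


@dataclass
class RunResult:
    """Hypothetical summary of one evaluation run."""
    tasks_attempted: int
    tasks_solved: int
    total_cost_usd: float


def meets_target(run: RunResult) -> bool:
    """True only if the run is both accurate enough and cheap enough."""
    if run.tasks_attempted == 0:
        return False
    accuracy = run.tasks_solved / run.tasks_attempted
    # Assumption: cost is averaged over every attempted task.
    cost_per_task = run.total_cost_usd / run.tasks_attempted
    return accuracy >= TARGET_ACCURACY and cost_per_task <= MAX_COST_PER_TASK


if __name__ == "__main__":
    efficient = RunResult(tasks_attempted=100, tasks_solved=90, total_cost_usd=40.0)
    brute_force = RunResult(tasks_attempted=100, tasks_solved=90, total_cost_usd=5000.0)
    print(meets_target(efficient))
    print(meets_target(brute_force))
```

The second run has the same accuracy as the first but fails the check, which is the point of the efficiency requirement: a high score obtained through heavy compute spending does not count.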

This challenge could become a milestone in AGI development, as researchers strive to create more efficient, adaptable AI systems.
